Testing Decision Science in NYC. Can you guess which worked best?

October 31st was an exciting day for the =mc Consulting team. We were testing various decision science principles on behalf of MSF/DWB with a brilliant group of young face-to-face canvassers from GiveBridge.

October 31st was also Halloween, a big thing in NYC, so we used some ideas themed around the festival. The purpose? =mc Consulting is building up a bank of robust field experiments designed to test the direct impact of decision science on fundraising, building on the work in Change for Good.

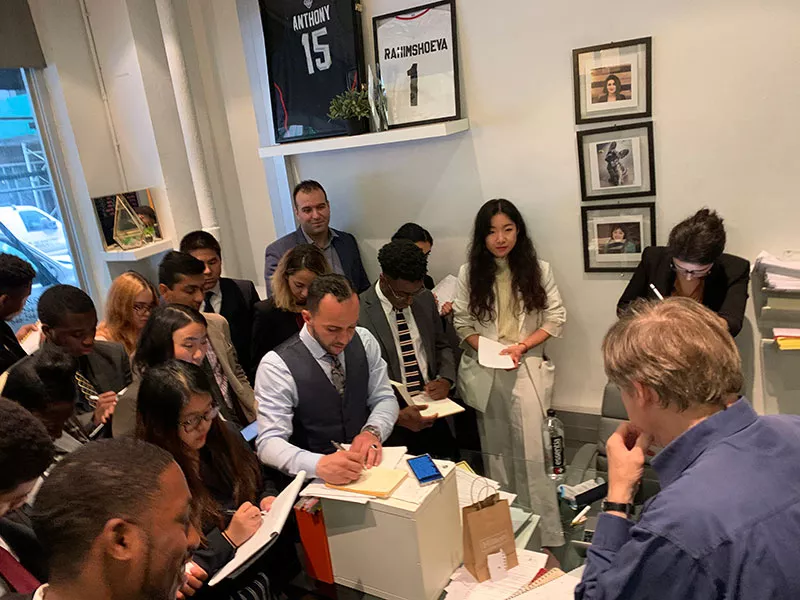

In the morning my colleague Alan Hudson and I trained the talented young canvassers in decision science. The team of 23 then split into four groups and headed for different parts of the city. Once on site, each group combined its normal, successful F2F approach with one of four experimental elements, so that we could track the impact of each.

Bernard Ross training the GiveBridge team in decision science.

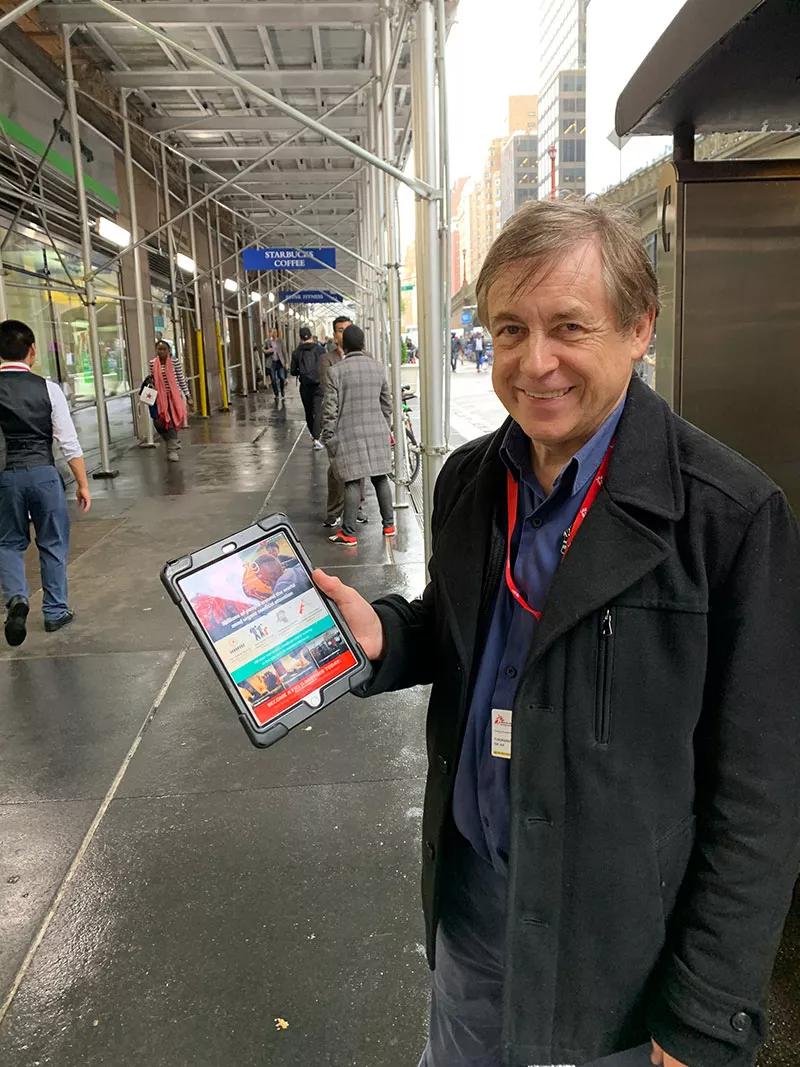

As serious researchers, we joined one of the teams outside Grand Central Station and actually took part in the fundraising.

The four experiments, and the underlying principles we tested, are below.

Experiment 1: Principle – Anchoring

The experiments in action: notice the 43 in the background, which was mentioned in the canvass conversation.

One team approached prospects and introduced a specific number into the conversation, either by standing near a sign with a number on it or by mentioning a number, e.g. “Hooray, it’s 31st October – Halloween.”

The idea is to see the impact of ‘anchoring’ a number on the average monthly gift among those who sign up. In the photo above we were using the street number, 43, as an anchor.

Experiment 2: Principle – Reciprocity

Another team approached prospects and offered them a candy (a mini Mars bar) upfront, mentioning ‘trick or treat’. The person could keep the candy whether or not they donated, but they obviously needed to stop, or at least pause, to get it.

Here we were looking for the effect of Cialdini’s principle of reciprocity: “I give you something, so you have to give me something back.” We were measuring its impact on the number of people giving.


Experiment 3: Principle – Normalisation

Team three were asked to mention to anyone who stopped how this street/location seemed “generous – lots of people stopping and donating.”

Here we’re exploring how to make stopping appear “normal”. Of course, in reality most people walk straight past the canvassers. We were interested to see how normalisation affected sign-ups.

Experiment 4: Principle – Salience/Agency

The last team were asked not to talk about ‘millions in need in Yemen’ (their normal script) and instead to focus on one child, ‘Yusef’, and how $35 would help him get the drugs and treatment he urgently needed (and how every month there was another Yusef in need).

Here we were exploring how to make a huge crisis in Yemen seem concrete and solvable. (NB: we were also testing having no picture of ‘Yusef’ to show, allowing prospects to create their own image.)

Bernard Ross practising what he preaches: actually street fundraising in NYC for Doctors Without Borders… in the rain.

Can you predict the outcome?

The results are being processed now (donors have a cooling-off period, so the final results are only just coming in). Watch out for a follow-up in two weeks.

In the meantime, here’s a quiz for all you fundraisers, whether you’re a fan of decision science or not. We were obviously testing a number of things here, and not all of them are directly comparable. But here goes:

• Among Principles 2, 3 and 4, which do you think had the biggest effect on the number of sign-ups?

• And for Principle 1, what do you think was the average monthly gift when 43 was used as an anchor?

Thanks to all who helped, especially the great folks from GiveBridge. And super special thanks to Thomas Kurmann, Fundraising Director of Doctors Without Borders USA, for making it happen.

Let me know if you want to know more.

And keep in touch with the world’s largest field experiment in fundraising in the arts through the decision science website.

And to find out more about the principles involved, try this download.

Bernard Ross is Director of =mc Consulting.